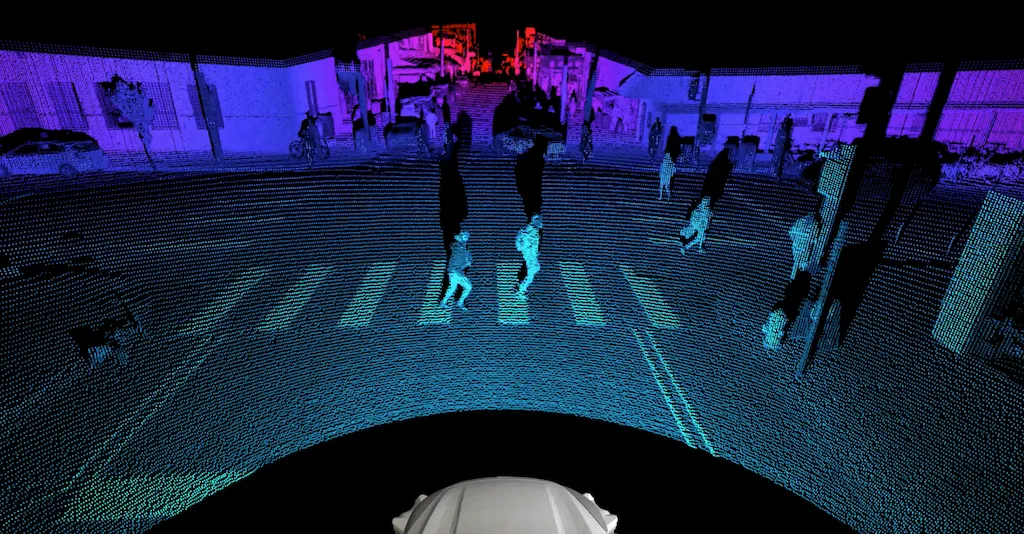

Researchers at Carnegie Mellon University’s Argo AI Center for Autonomous Vehicle Research, a private-public partnership funded by Argo for advancing the autonomous-vehicle (AV) field, say they have come up with a way to use lidar data to visualize not just where other moving objects currently are on the roads, but also where they are likely to be several seconds into the future, including multiple possible paths these objects could take.

Lidar is an incredible tool for AVs. Short for “light imaging, detection and ranging,” the basic concept behind lidar is relatively straightforward and was first attempted in a rudimentary fashion by engineers in the 1930s with searchlights: shine beams of light outward from a source, measure how much light bounces back after it reflects off nearby objects, record how long the light takes to return, and then use these measurements to build a precise picture of the surrounding world.
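The timing step can be made concrete with a little arithmetic. The following is a minimal sketch, not code from any lidar product: the function name is hypothetical, and it assumes a single pulse whose round-trip time has already been measured. Because the light travels out and back, the distance to the object is half the round trip multiplied by the speed of light.

```python
# Time-of-flight ranging: a pulse travels to the object and back,
# so the one-way distance is half of the round-trip distance.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def range_from_round_trip(round_trip_seconds: float) -> float:
    """Distance in meters to the reflecting surface (hypothetical helper)."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


# A return that arrives 200 nanoseconds after the pulse left
# corresponds to a surface roughly 30 meters away.
distance_m = range_from_round_trip(200e-9)
print(f"{distance_m:.2f} m")  # prints "29.98 m"
```

A real sensor repeats this measurement many thousands of times per second across many beam directions, which is what produces a point cloud.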

Over the last two decades, lidar with lasers that are safe and invisible to human eyes has become popular across all sorts of industries, especially in the AV sector. Numerous leading companies such as Argo AI mount lidar sensors on top of and around their AVs to image their surroundings as they drive autonomously down roads with traditional traffic. At Argo, this data is combined with the imagery from video cameras and radar in a process called “sensor fusion,” to get a richer, more detailed and more accurate view of the world than would be possible using just one type of sensor.

Carnegie Mellon researchers and Argo engineers say their new method, called “FutureDet,” will give AVs the ability to look at a lidar point cloud, the dotted image of the world surrounding an AV, and see not just where the other cars and pedestrians are now, but where they will be in the near future (three seconds ahead).

Their method is described in a paper being presented at the 2022 Conference on Computer Vision and Pattern Recognition (CVPR) in the Workshop for Autonomous Driving taking place in New Orleans.

“This method basically takes in lidar measurements and spits out the possible future locations of objects,” says Dr. Deva Ramanan, the head of the Argo CMU Center for Autonomous Vehicle Research, Argo Principal Scientist, and supervising researcher on the paper.

Other co-authors on the FutureDet paper include Argo Principal Scientist and Technical University of Munich professor Laura Leal-Taixé, Technical University of Munich researcher Aljoša Ošep, CMU researcher Neehar Peri, and Jonathon Luiten from RWTH Aachen University.

FutureDet differs from previous techniques for handling lidar data, which take a more “modular” approach. In those methods, lidar data passes through several distinct modules, or groupings of computer programs, before it can influence what driving actions an AV will take.

For example, lidar data comes into an AV’s computer systems and passes through modules including detection, motion tracking (computer programs that can follow the path of moving objects to ensure an AV steers clear of them), and forecasting (using a moving object’s previous path to anticipate where it is likely to be in the future so the AV can be ready to move safely around it). Lidar data is combined with other types of data in each module and then ultimately this combination of data is used by the self-driving system to chart its course.
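To illustrate what such a modular chain looks like in code, here is a toy sketch. It is not Argo's software or any production stack: every name in it is hypothetical, the "detector" simply passes through object centers that are already given, tracking is a greedy nearest-neighbor match, and forecasting is a constant-velocity extrapolation. The point is only to show three separate stages, each consuming the output of the previous one.

```python
from dataclasses import dataclass, field
import math


@dataclass
class Track:
    track_id: int
    positions: list = field(default_factory=list)  # (x, y) per frame, in meters


def detect(frame):
    """Stand-in detector: the toy 'frame' is already a list of (x, y) centers."""
    return list(frame)


def update_tracks(tracks, detections, max_jump_m=2.0):
    """Greedy nearest-neighbor association of detections to existing tracks."""
    unmatched = list(detections)
    for track in tracks:
        if not unmatched:
            break
        last = track.positions[-1]
        nearest = min(unmatched, key=lambda d: math.dist(last, d))
        if math.dist(last, nearest) <= max_jump_m:
            track.positions.append(nearest)
            unmatched.remove(nearest)
    for det in unmatched:  # anything left over starts a new track
        tracks.append(Track(track_id=len(tracks), positions=[det]))
    return tracks


def forecast(track, frame_dt_s, horizon_s):
    """Constant-velocity extrapolation from the last two tracked positions."""
    if len(track.positions) < 2:
        return track.positions[-1]
    (x0, y0), (x1, y1) = track.positions[-2], track.positions[-1]
    vx, vy = (x1 - x0) / frame_dt_s, (y1 - y0) / frame_dt_s
    return (x1 + vx * horizon_s, y1 + vy * horizon_s)


# Three toy frames, 0.5 s apart: one object moving along x, one along y.
frames = [
    [(0.0, 0.0), (10.0, 5.0)],
    [(1.0, 0.0), (10.0, 5.5)],
    [(2.0, 0.0), (10.0, 6.0)],
]
tracks = []
for frame in frames:
    tracks = update_tracks(tracks, detect(frame))

predictions = {t.track_id: forecast(t, frame_dt_s=0.5, horizon_s=3.0) for t in tracks}
print(predictions)  # {0: (8.0, 0.0), 1: (10.0, 9.0)}
```

Note that the forecast stage sees only what the tracker hands it, so a mistake made in detection or tracking carries straight through to the prediction.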

In contrast to this type of modular approach, FutureDet can be seen as a step toward “end-to-end,” meaning lidar data comes into an AV’s computers on one end, and a driving instruction comes out the other end. However, such “black-box” end-to-end approaches may be difficult to interpret and analyze. Instead, FutureDet processes the lidar data to understand the world and how it will evolve, leaving the decision of where the AV should and shouldn’t drive to a downstream motion planner.

The other significant innovation of FutureDet is that it predicts multiple future paths of moving objects. To do so, FutureDet repurposes spatial “heatmaps” that can reason about the current locations of multiple moving objects detected with lidar in the present, and applies them to the task of estimating multiple object locations in the future.
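The article does not spell out how object locations are read out of a heatmap, so the following is only a sketch of one common approach in heatmap-based detectors: treat every cell that beats a score threshold and is higher than all of its neighbors as one candidate location. The grid values, the threshold, and the function name are all made up for illustration. Applied to a heatmap for a future time step, two separate peaks would correspond to two places an object might end up.

```python
def heatmap_peaks(heatmap, threshold=0.3):
    """Return (row, col, score) for every cell that meets the threshold and is
    strictly higher than all of its (up to 8) neighbors, best score first."""
    rows, cols = len(heatmap), len(heatmap[0])
    peaks = []
    for r in range(rows):
        for c in range(cols):
            score = heatmap[r][c]
            if score < threshold:
                continue
            neighbors = [
                heatmap[rr][cc]
                for rr in range(max(r - 1, 0), min(r + 2, rows))
                for cc in range(max(c - 1, 0), min(c + 2, cols))
                if (rr, cc) != (r, c)
            ]
            if all(score > n for n in neighbors):
                peaks.append((r, c, score))
    return sorted(peaks, key=lambda p: p[2], reverse=True)


# Illustrative scores for one future time step on a tiny 4x5 grid.
future_heatmap = [
    [0.0, 0.1, 0.0, 0.0, 0.0],
    [0.1, 0.7, 0.1, 0.0, 0.0],
    [0.0, 0.1, 0.0, 0.2, 0.4],
    [0.0, 0.0, 0.0, 0.1, 0.2],
]
print(heatmap_peaks(future_heatmap))  # [(1, 1, 0.7), (2, 4, 0.4)]
```

Because each peak is found independently, the same readout that locates several objects in the present can also return several candidate locations in the future.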

Why is this helpful? Because it allows the lidar detection system on its own to be able to react to future events, without requiring separate modules. Because the lidar system is already built to detect where objects are around it at present, FutureDet provides it with the same kind of object data, only for where the objects are likely to be in the future.

To put it another way: When we human beings see a car driving down the street toward us with its right turn signal on, we expect the car will soon turn right. FutureDet works along similar lines: it predicts a spatial heatmap of the car already turning right, and leaves it to the AV’s motion planning and control system to decide how the AV should respond to that action.

Using the moving object’s current position, velocity, and trajectory, FutureDet creates not just one but several possible future paths in lidar and ranks them by its confidence that the object will follow each one. It then shows all of these paths to the AV as if they were occurring in real time and sees how the AV plans to respond to each.
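The idea of generating several candidate paths and ranking them can be sketched in a few lines. This is an illustration only, not FutureDet itself: in the real system a neural network produces both the paths and the confidences, whereas here the paths come from a simple constant-speed, constant-turn-rate rollout and the confidence values are hand-picked placeholders. All names are hypothetical.

```python
from dataclasses import dataclass
import math


@dataclass
class FutureHypothesis:
    label: str
    waypoints: list    # (x, y) positions in meters, one per time step
    confidence: float  # placeholder score in [0, 1]


def rollout(x, y, speed_mps, heading_rad, turn_rate_rad_s, horizon_s=3.0, dt_s=1.0):
    """Constant-speed, constant-turn-rate rollout from the current state."""
    waypoints = []
    for _ in range(int(round(horizon_s / dt_s))):
        heading_rad += turn_rate_rad_s * dt_s
        x += speed_mps * math.cos(heading_rad) * dt_s
        y += speed_mps * math.sin(heading_rad) * dt_s
        waypoints.append((round(x, 2), round(y, 2)))
    return waypoints


def rank_hypotheses(hypotheses, top_k=3):
    """Keep the top_k hypotheses, most confident first."""
    return sorted(hypotheses, key=lambda h: h.confidence, reverse=True)[:top_k]


# Current state of one observed vehicle: at the origin, heading along +x at 5 m/s.
state = dict(x=0.0, y=0.0, speed_mps=5.0, heading_rad=0.0)
hypotheses = [
    FutureHypothesis("turn_left", rollout(**state, turn_rate_rad_s=0.3), 0.15),
    FutureHypothesis("go_straight", rollout(**state, turn_rate_rad_s=0.0), 0.60),
    FutureHypothesis("turn_right", rollout(**state, turn_rate_rad_s=-0.3), 0.25),
]
ranked = rank_hypotheses(hypotheses)
for h in ranked:
    print(f"{h.confidence:.2f} {h.label}: {h.waypoints}")
```

A downstream planner could then weigh each ranked path by its confidence instead of committing to a single guess about what the other vehicle will do.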

“Essentially, we take a moving object’s previous one second of lidar data and give it to a neural network, and ask it where the object will be next, and it gives us three or four possible paths,” Ramanan says.

A neural network is a type of artificial intelligence program that “learns” about subjects by analyzing many previous examples. In this case, the examples are hundreds of thousands of four-second-long clips of lidar recordings from AVs driving down the street, in which the other objects around the AV have been pre-labeled by human analysts according to their proper categories: “other vehicles,” “cyclists,” “pedestrians,” and more. The neural network learns to recognize and categorize these distinct objects, and can then recognize new instances of them out on the roads or in a computer simulation of the roads.

FutureDet is still an early-stage method and will need to be further studied in computer simulations before it can be tested on any real-world AVs on test tracks or public roads.

However, it has already been tested on open-source lidar data from the AV company Motional’s nuScenes dataset, available freely online, and will soon be tested on lidar data from Argo’s open-source Argoverse dataset as well. In these initial tests, it achieved 4% greater accuracy than previous methods in forecasting the future paths of objects that weren’t moving in straight lines.

“That is definitely the goal of all this research, to develop new methods that we can ultimately put on the AVs that improve accuracy, reduce the amount of code we’re running, and give us even more efficiencies in processing power and energy,” says Ramanan.